CAIPO only works if users say yes to always-on listening. Most didn't.

Role

Lead Product Designer

Led end-to-end design from problem definition to shipped solution.

Scope

0→1 · End-to-end

Timeline

15 weeks

Team

Mobile · Hardware · AI

Problem

Users understood what CAIPO did but didn't trust always-on listening enough to enable it.

Insight

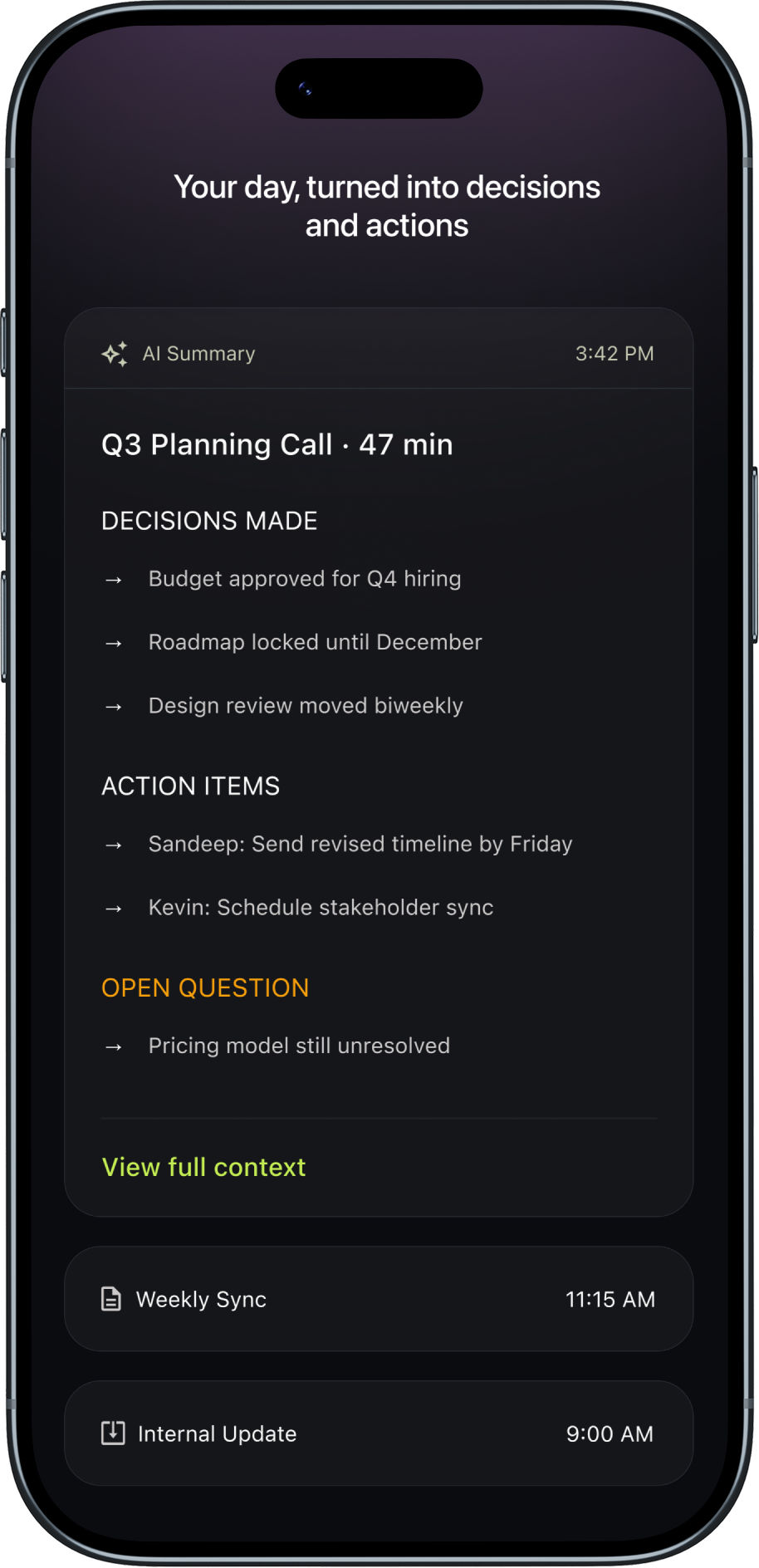

Users needed to see value before granting permission.

What I Did

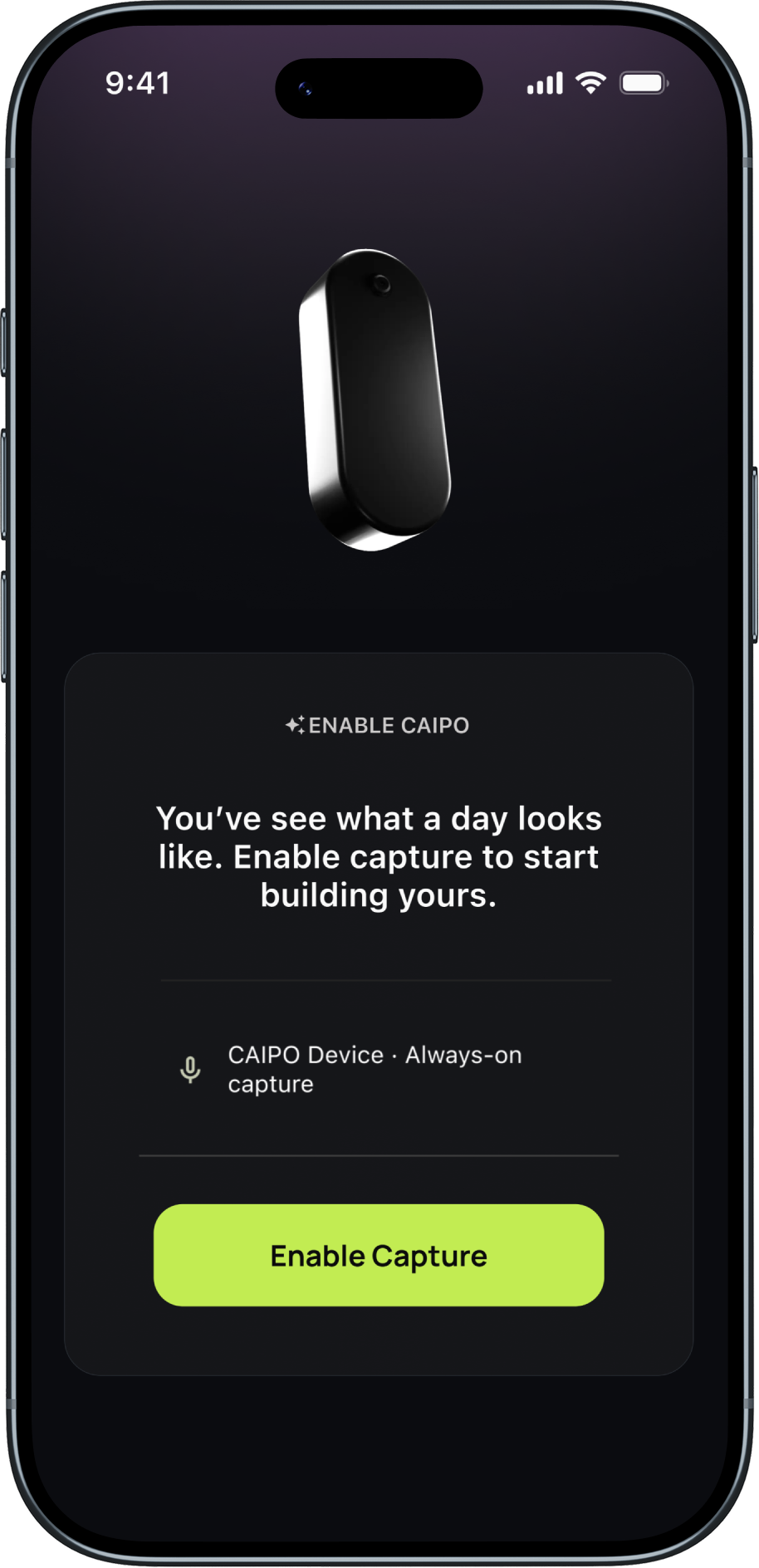

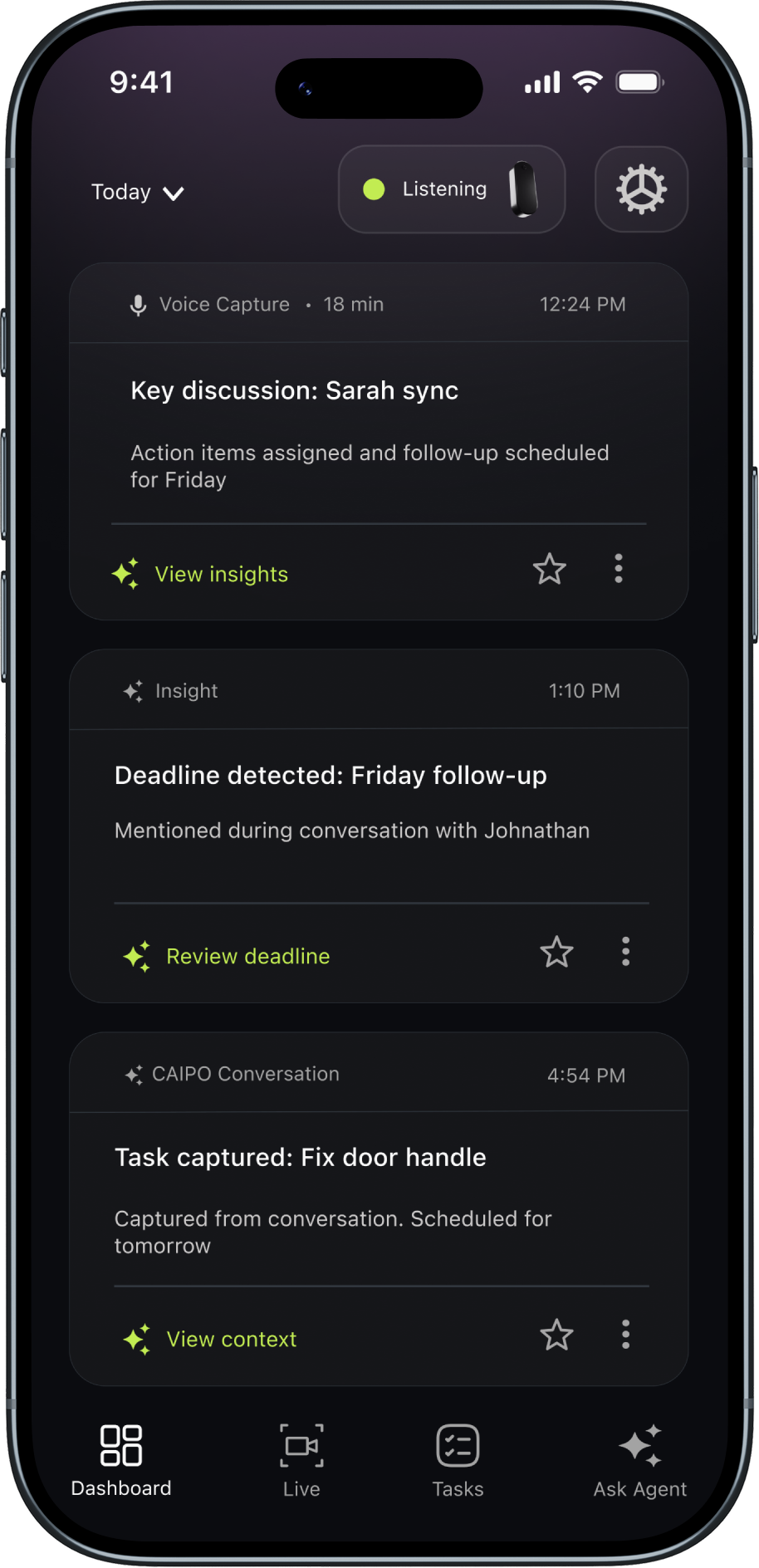

Showed value first. Kept listening status visible. Made pause one tap.

Principle

Optimized for progressive trust-building instead of upfront consent.

Reduced the solution to 3 core trust-building decisions

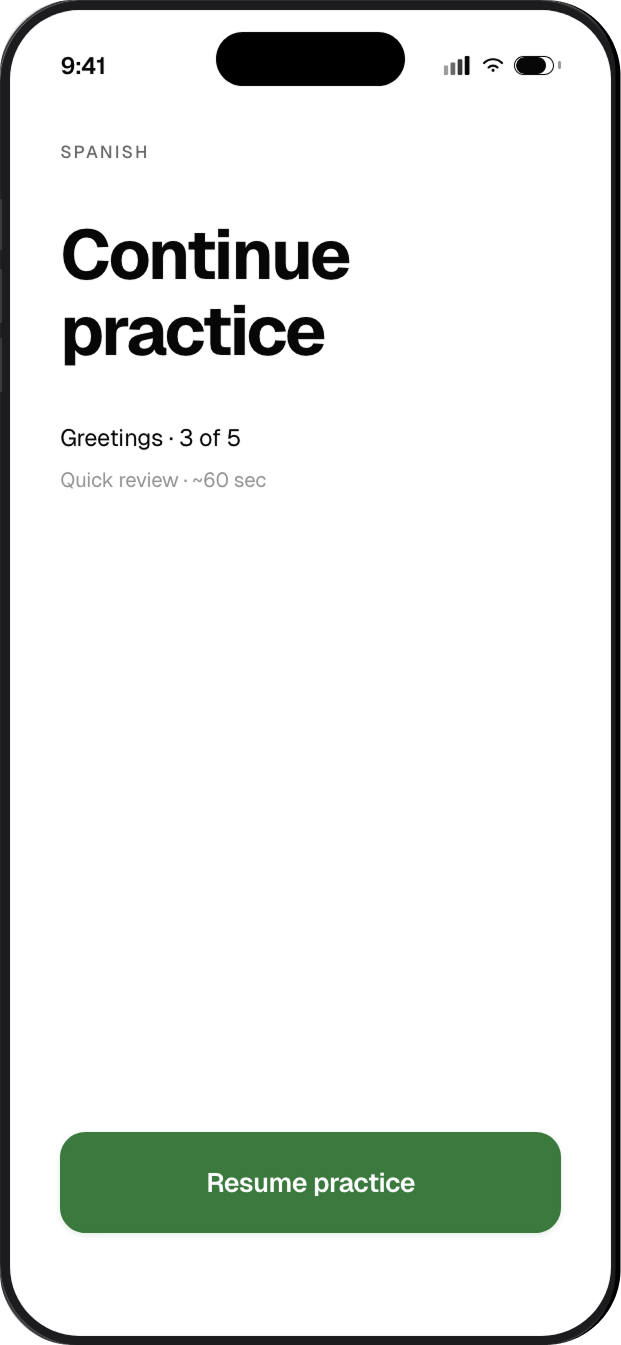

01 · Earn trustValue demo

01 · Earn trustValue demo 01 · Earn trustConsent after preview

01 · Earn trustConsent after preview 02 · Maintain trustPersistent capture chip

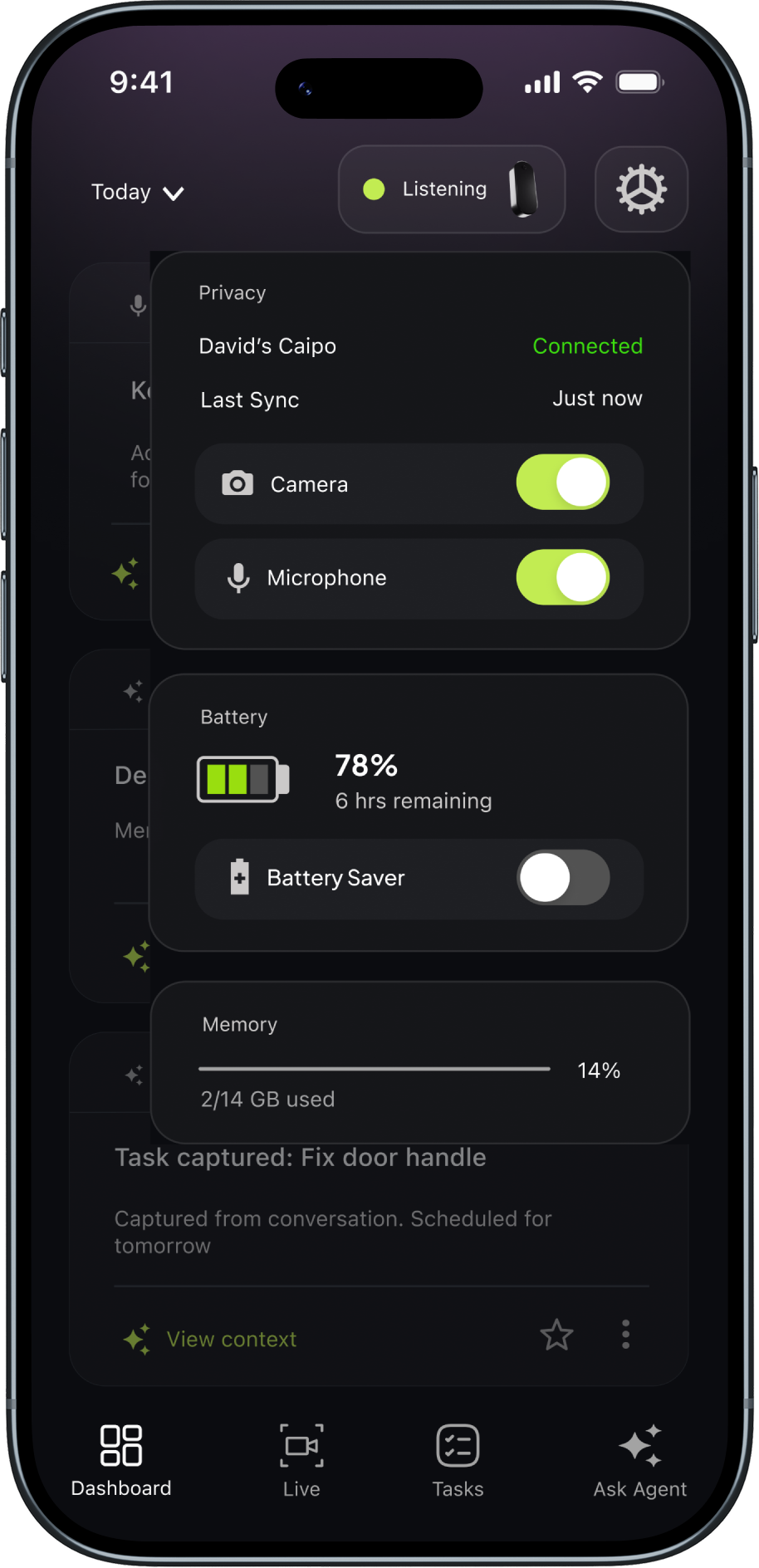

02 · Maintain trustPersistent capture chip 03 · Protect trustControl center

03 · Protect trustControl centerThe change.

Result

0%

opt-in rate

BASELINE WAS 31% · SHIP TARGET WAS 50%

0%

Day 3 retention

0%

Returned after pause
